Big data is commonly defined as data that is too big, too fast, and too hard for existing tools to process. “Too big” means that organizations now have to deal with petabyte-scale collections of data drawn from click streams, transaction records, sensors, and many other sources. “Too fast” means that the data is not only large but must also be processed quickly, for example to detect fraud at a point of sale or to decide which ad to show a user on a web page. “Too hard” means that the data does not fit neatly into an existing processing tool; that is, it requires more complex analysis than current tools provide.

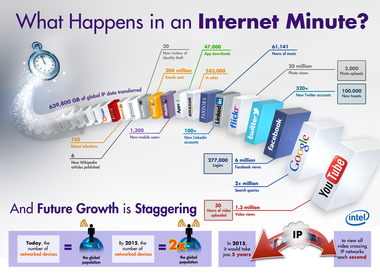

Big data means massive volumes of information. IBM reports that over 2.7 zettabytes of data exist in the digital universe today, with over 571 new websites being created every minute. Google is one source of ‘big data’: in 2008, it was already processing 20,000 terabytes (20 petabytes) of data a day.
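
To make these scales easier to compare, here is a minimal sketch of the unit arithmetic behind the figures above. It assumes decimal (SI) prefixes, where 1 petabyte is 1,000 terabytes, which matches the 20,000 terabytes = 20 petabytes equivalence quoted here. The names `UNITS` and `convert` are illustrative only.

```python
# Decimal (SI) storage units expressed in bytes.
# Assumption: SI prefixes (powers of 1000), not binary prefixes (powers of 1024).
UNITS = {
    "TB": 10**12,  # terabyte
    "PB": 10**15,  # petabyte
    "EB": 10**18,  # exabyte
    "ZB": 10**21,  # zettabyte
}


def convert(value, from_unit, to_unit):
    """Convert a data size from one SI unit to another."""
    return value * UNITS[from_unit] / UNITS[to_unit]


# Google's reported daily volume in 2008, expressed in petabytes.
google_daily_pb = convert(20_000, "TB", "PB")

# IBM's reported size of the digital universe, expressed in petabytes.
universe_pb = convert(2.7, "ZB", "PB")

print(f"Google, 2008: {google_daily_pb:.0f} PB per day")
print(f"Digital universe: {universe_pb:,.0f} PB")
```

Expressing both figures in the same unit shows that a zettabyte-scale total is several orders of magnitude larger than even a 20-petabyte daily workload.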
